Over two-thirds of students use AI for information searching. The HEPI survey found that explaining concepts (58%), summarizing articles (48%), and suggesting research ideas (41%) are the top use cases. Students treat AI as an on-demand tutor that can clarify difficult material outside classroom hours.

Essay Writing and Content Creation

Among students who use AI, 89% apply it to homework, 53% to essays, and 24% specifically to generate first drafts, according to BestColleges. Common applications include brainstorming, outlining, grammar checking, and paraphrasing.

Most students are not submitting fully AI-written work. The HEPI survey found only 18% of students include AI-generated text directly in their assignments. The majority use AI to assist their writing process, not replace it.

Cornell research on college application essays found that AI-generated text tends to be generic and formulaic. Students who rely on AI as a ghostwriter may actually disadvantage themselves — admissions professionals can often spot the uniform style AI produces.

Assessment Preparation

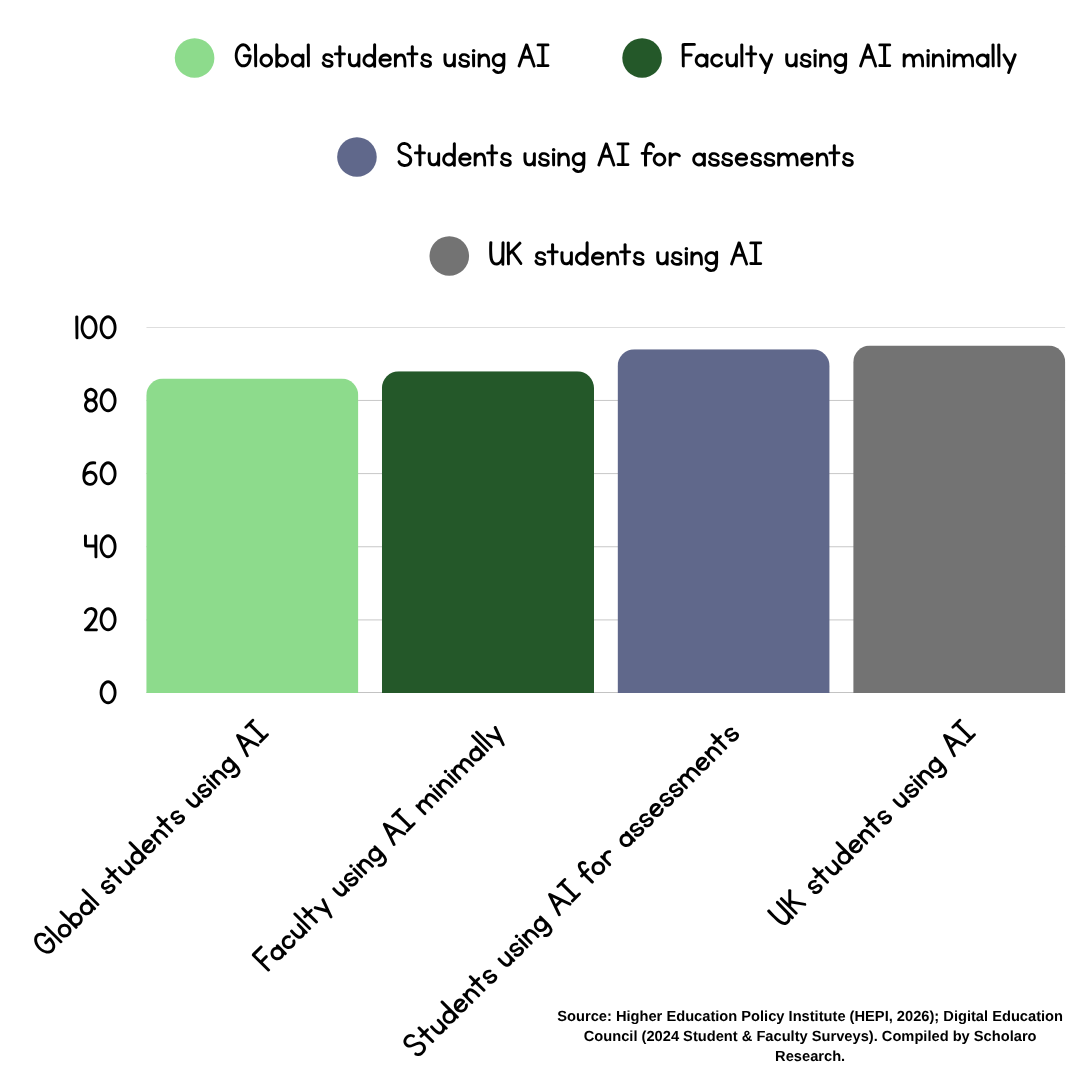

Student use of AI for assessments has grown sharply. HEPI data shows 88% of students now use generative AI for assessments, up from 53% in 2024. Nearly half (48%) use AI for at-home tests. Common applications include generating study guides, creating practice problems, and reviewing material before exams.

The Turnitin/Vanson Bourne survey of 3,500 respondents across six countries found that 70% of students use AI at least occasionally for assignments. However, 50% say they do not know how to get the most benefit from it — which signals an opportunity for institutional guidance.

Graduate-Level Research

At the doctoral level, AI applications extend into literature reviews, cross-source synthesis, data analysis, citation management, and manuscript feedback. These uses can accelerate research but raise questions about competency development that institutions should address in graduate training.

AI Cheating: What the Data Shows

Measuring Misconduct Is Complicated

How many students use AI to cheat depends on how you define cheating. Survey data reveals divided views. Approximately 51–54% of students believe using AI on assignments constitutes cheating, according to BestColleges. Yet 22% admit to using AI even while believing it is inappropriate.

More concrete indicators come from detection and disciplinary data:

Turnitin (late 2024): Out of 280 million papers reviewed since April 2023, over 9.9 million were flagged as containing at least 80% AI writing. The vast majority of papers did not show high levels of AI content.

UK misconduct cases: Freedom of Information data obtained by The Guardian found nearly 7,000 UK university students were formally caught cheating with AI in 2023–24 — 5.1 cases per 1,000 students, triple the rate from the prior year.

Direct admission: The HEPI survey found 18% of UK undergraduates admit to submitting AI-generated text in assignments.

These figures warrant attention, but context matters. A tripling of misconduct cases may reflect improved detection as much as increased cheating. And while 18% submitting AI text is concerning, the substantial majority are not doing so.
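To put the detection and disciplinary figures above on a common scale, the arithmetic can be sketched as follows. This is a minimal illustration using only the numbers quoted above. The variable names are ours, and the prior-year UK rate is inferred from the "triple" description rather than reported directly.

```python
# Figures quoted in the text above (Turnitin, late 2024; Guardian FOI data, 2023-24).
papers_reviewed = 280_000_000
papers_flagged_80_plus = 9_900_000  # "over 9.9 million", so treat as a lower bound

uk_cases_per_1000 = 5.1

# Share of reviewed papers flagged as containing at least 80% AI writing.
flagged_share = papers_flagged_80_plus / papers_reviewed

# UK formal misconduct rate expressed as a percentage of students.
uk_rate_pct = uk_cases_per_1000 / 1000 * 100

# Assumption: "triple the prior year" is treated as exactly 3x, so this is
# a rough implied figure, not a reported one.
implied_prior_per_1000 = uk_cases_per_1000 / 3

print(f"Flagged share: {flagged_share:.1%}")
print(f"UK misconduct rate: {uk_rate_pct:.2f}% of students")
print(f"Implied prior-year rate: {implied_prior_per_1000:.1f} per 1,000")
```

On these numbers, roughly 3.5% of reviewed papers were flagged as mostly AI-written, and about 0.5% of UK students faced a formal case.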

The Detection Challenge

Approximately 68% of faculty now use AI detection tools, up from 38% in 2022–23, according to educator surveys. Turnitin claims a false positive rate of less than 1% for documents with over 20% AI-generated content.

Detection accuracy is contested. Independent analyses report higher false positive rates in practical use (2–5%). A University of Reading study found 94% of AI-generated exam answers went undetected in a controlled test. Faculty confidence in detection is modest: only 54% feel effective at identifying AI content.

Detection tools have also shown elevated false positive rates for multilingual learners. Princeton and MIT have advised against relying solely on AI detectors due to reliability and bias concerns, as noted in AllAboutAI analysis.
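The gap between a 1% and a 2–5% false positive rate is easier to see as a count of affected students. The sketch below is a hypothetical illustration: the cohort of 1,000 human-written submissions is an assumption chosen for round numbers, the function name is ours, and Turnitin's "less than 1%" claim is treated as an upper bound of 1%.

```python
def expected_false_flags(human_written: int, false_positive_rate: float) -> float:
    """Expected number of human-written papers wrongly flagged as AI."""
    return human_written * false_positive_rate


# Hypothetical cohort size (an assumption, not taken from any survey).
HUMAN_WRITTEN_PAPERS = 1_000

# Rates quoted in the text: Turnitin's claimed ceiling and the range
# reported by independent analyses.
rates = {
    "Turnitin claim (under 1%)": 0.01,
    "Independent analyses, low end (2%)": 0.02,
    "Independent analyses, high end (5%)": 0.05,
}

for label, rate in rates.items():
    flagged = expected_false_flags(HUMAN_WRITTEN_PAPERS, rate)
    print(f"{label}: about {flagged:.0f} wrongly flagged per {HUMAN_WRITTEN_PAPERS:,}")
```

Under these assumptions, the same cohort would see between 10 and 50 honest submissions flagged, which is consistent with the advice against relying on detectors alone.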

The Broader Picture

A ScienceDirect study analyzing high school cheating behaviors before and after ChatGPT found overall cheating remained relatively stable. Students may be switching methods rather than increasing total dishonesty.

Large majorities believe AI should not write entire papers. The HEPI survey found 53% of students say fear of being accused of cheating is the main factor putting them off AI use. Deterrents appear to be working — but they may also discourage legitimate use.

Student AI Literacy: The Preparation Problem

Despite high usage, students recognize gaps in their capabilities. The Digital Education Council (DEC) student survey found 58% feel they lack sufficient AI knowledge and skills. Nearly half (48%) do not feel adequately prepared for an AI-enabled workplace.

The Turnitin survey found 67% of students believe AI proficiency will improve their employability. Yet 59% worry AI could reduce their critical thinking skills, and 49% worry about becoming over-reliant on AI tools. Students are using technology they do not fully understand while recognizing the risks.

Faculty Are Also Struggling

The DEC Faculty Survey, which gathered 1,681 responses from 52 institutions across 28 countries, found that while 61% of faculty have used AI in teaching, 88% of those do so only minimally.

Forty percent of faculty say they are just beginning their AI literacy journey. Only 17% rate themselves at an advanced or expert level. Eighty percent report a lack of clarity on how AI can be applied in teaching. Only 4% are fully aware of their institutional AI guidelines and consider them comprehensive.

This creates inconsistent student experiences. The HEPI survey found only 29% of students agree their institution encourages them to use AI, while 40% disagree. The proportion saying university staff are well-equipped to work with AI has improved (42% in 2025, up from 18% in 2024), but significant gaps remain.

What Students Want

Students are not asking institutions to ban AI. They want greater integration in teaching, formal training on AI tools, involvement in policy development, and clear guidance on acceptable use.

Building a Framework for Responsible AI Use

Establishing Clear Policies

UNESCO reports that only 10% of schools and universities have established guidelines for AI use. This policy vacuum creates confusion for students and faculty alike.

Effective policies should:

Acknowledge both benefits and risks of AI rather than treating it as purely threatening

Specify acceptable use with clear categories — many institutions adopt a three-tier model: no AI permitted, AI assistance with disclosure, or open AI use with attribution

Reinforce core academic integrity values: honesty, trust, fairness, respect, responsibility

Address data privacy and security, particularly when students input sensitive information into AI tools

Provide for equitable access across student populations

Include mechanisms for regular policy review as technology evolves

The U.S. Department of Education issued guidance in 2025 affirming that responsible AI use in education is permissible under federal programs and outlining key principles for integration.

Practical Classroom Strategies

Faculty who redesign assignments to make the writing process visible report fewer integrity issues. Scaffolded assignments requiring outlines, drafts, and reflections make it difficult to substitute AI-generated work for genuine engagement.

Assessment redesign matters too. Assignments that AI cannot easily complete, such as personal reflection, in-class demonstration, or authentic application to local contexts, reduce both the temptation and the feasibility of misuse. Research suggests institutions that redesign assessments see 40% fewer integrity issues than those relying on detection alone.

The HEPI report recommends institutions "stress-test" their assessments to check whether AI can easily complete them. That is a practical first step.

Teaching AI Literacy Directly

Forward-thinking institutions teach students:

How AI generates content and its underlying limitations

How to identify bias and inaccuracy in AI outputs

How to verify AI-generated information against reliable sources

When AI use is appropriate versus inappropriate

How to properly disclose and cite AI assistance

This treats AI literacy as an essential competency rather than a problem to police. The World Economic Forum has articulated seven principles for responsible AI in education that provide useful guidance for institutions developing literacy programs.

Strategic Implications for University Leadership

Governance Priorities

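University leadership should prioritize developing or updating institution-wide AI guidelines. Current awareness is dangerously low: in the DEC Faculty Survey, only 4% of faculty are fully aware of their institutional AI guidelines and consider them comprehensive. Communicate policies clearly to both students and faculty, and build mechanisms for regular review.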

The DEC Faculty Survey found 83% of faculty are concerned about students' ability to critically evaluate AI outputs. Addressing this requires systematic investment, not ad hoc responses.

Faculty Development

Faculty cannot guide students without support. Provide training and resources that help faculty understand AI tools, redesign assessments, and apply consistent standards. Create structured forums for sharing strategies across disciplines.

Equity and Infrastructure

Access to AI tools is uneven. Evaluate whether disparities are affecting educational equity and consider whether institutional provision of AI tools might address gaps. Balance innovation with data security and privacy protections.

Workforce Preparation

Recognize AI literacy as an essential competency alongside traditional academic skills. Sixty-seven percent of students believe AI proficiency will improve their employability, according to Turnitin research. Curricula should reflect this. Partner with industry to understand evolving skill requirements.

Conclusion

AI is already embedded in student academic life. The question for university leadership is not whether to engage but how.

The data shows students using AI broadly and with increasing sophistication — to explain concepts, summarize readings, brainstorm ideas, and improve their writing. Most are not using it to cheat. But they lack confidence and formal guidance, and they are asking institutions for leadership.

Institutions that establish clear policies, invest in literacy for students and faculty, and redesign pedagogy for the AI era will be better positioned to preserve academic integrity while preparing students for careers where AI fluency is expected.

A reactive posture focused primarily on detection and punishment is unlikely to succeed. Students are already adapting. The institutions that shape this transformation, rather than resist it, will set the standard for years to come.
