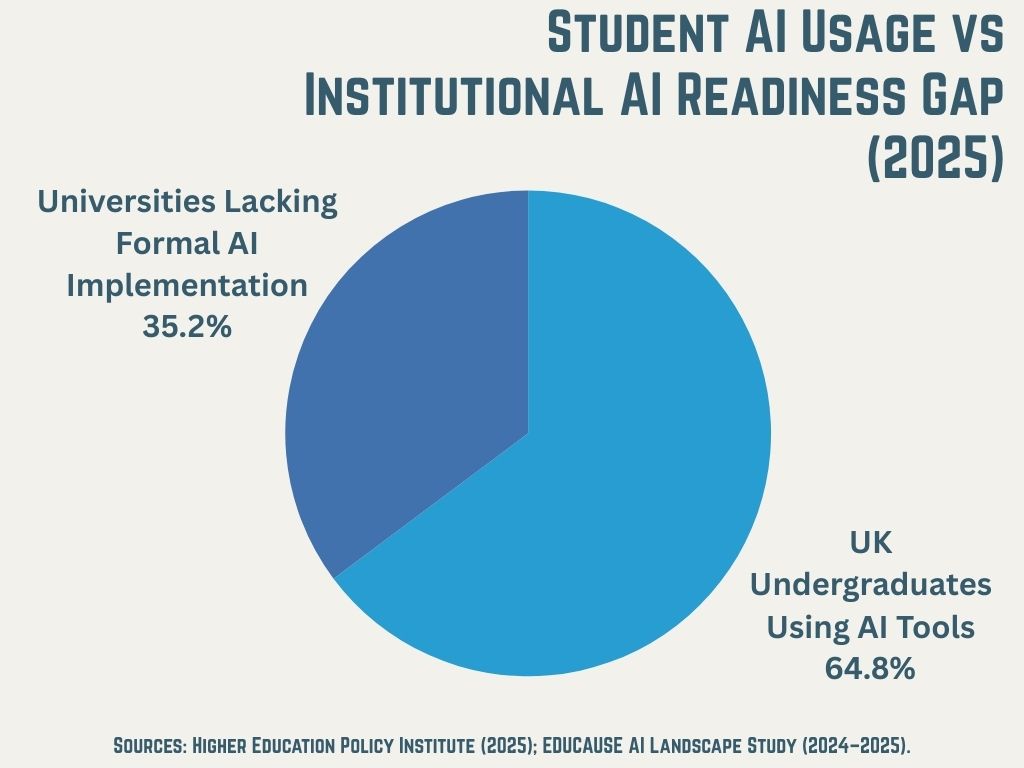

More than half of universities still lack formal AI implementation, according to an EdTech Connect analysis of higher education. Yet the Higher Education Policy Institute found that 92% of UK undergraduates use AI tools in their academic work. This gap between institutional readiness and student behavior defines the challenge facing university leadership today.

The question is no longer whether to adopt large language models. It is how fast and how well you can integrate them across teaching, research, and operations. Regulatory pressure adds urgency. The European Union's AI Act now imposes transparency requirements on institutions with European students or partnerships. U.S. guidance remains fragmented, leaving institutional discretion as both freedom and burden.

This article provides a strategic framework for assessing LLM adoption across six areas: strategy development, implementation models, AI literacy education, policy creation, student-centered approaches, and talent acquisition.

1. Building a Coordinated AI and LLM Strategy

Most institutions have responded to generative AI with scattered initiatives. Individual departments purchase tools. Faculty create their own policies. IT fields requests without clear priorities. This ad-hoc approach fails for predictable reasons.

Silos create inconsistent policies and duplicated costs. Your business school may license one platform while engineering uses another. Neither talks to your LMS. Faculty and staff develop workarounds without institutional guidance, increasing compliance and reputational risk.

A coordinated strategy requires four elements.

Vision alignment. Connect LLM adoption to your existing institutional mission. If you prioritize research excellence, start there. If student success metrics drive your board conversations, begin with retention and support applications. AI strategy should serve institutional strategy, not exist beside it.

Governance structure. Establish a cross-functional AI steering committee. In its whitepaper on generative AI, the Association of Pacific Rim Universities recommends including representatives from academic affairs, IT, legal and compliance, student affairs, and faculty governance. Without this breadth, you optimize for one constituency while creating problems for others.

Phased roadmap. Start with low-risk, high-impact pilots. Administrative automation and research assistance carry less institutional risk than assessment and grading. Learn from these before scaling to teaching applications where stakes are higher.

Budget realism. Account for licensing, infrastructure, training, and ongoing evaluation. Enterprise AI platforms cost $20 to $30 per user per month. Multiply by your faculty, staff, and student population, then by 12 for an annual figure. Add implementation and training costs. Many institutions significantly underestimate total cost of ownership.
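To make the budget arithmetic concrete, here is a minimal sketch in Python. The $20 to $30 per-user monthly range comes from the figures above. The headcounts and the 25% allowance for implementation and training are hypothetical placeholders, not benchmarks, and should be replaced with your own numbers.

```python
from dataclasses import dataclass


@dataclass
class Population:
    """Headcounts for one institution (hypothetical example values below)."""
    faculty: int
    staff: int
    students: int

    @property
    def total(self) -> int:
        return self.faculty + self.staff + self.students


def annual_license_cost(users: int, per_user_monthly: float) -> float:
    """Licensing only: users x monthly rate x 12 months."""
    return users * per_user_monthly * 12


def total_cost_range(pop: Population,
                     low_rate: float = 20.0,
                     high_rate: float = 30.0,
                     overhead_fraction: float = 0.25) -> tuple[float, float]:
    """Low and high annual estimates, licensing plus overhead.

    overhead_fraction is an ASSUMED allowance for implementation and
    training; the article gives no figure for it.
    """
    low = annual_license_cost(pop.total, low_rate)
    high = annual_license_cost(pop.total, high_rate)
    return low * (1 + overhead_fraction), high * (1 + overhead_fraction)


# Hypothetical campus: 1,000 faculty, 2,000 staff, 17,000 students.
campus = Population(faculty=1_000, staff=2_000, students=17_000)
low, high = total_cost_range(campus)
print(f"${low:,.0f} to ${high:,.0f} per year")
# prints "$6,000,000 to $9,000,000 per year"
```

Even this rough estimate shows why per-user pricing deserves scrutiny: at full-population licensing, a $10 difference in the monthly rate moves the annual total by millions.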

Assessment Questions

Do you have a written AI/LLM strategic plan?

Is there clear ownership and accountability for AI initiatives?

Are your timelines realistic given current capacity and budget?

2. Current Implementation Models Across Higher Education

Universities are deploying LLMs across four primary domains.

Research support. LLMs now assist with literature reviews, data analysis, and interdisciplinary collaboration. A July 2025 analysis in The Conversation noted that LLMs show strong potential in biological sciences, chemical sciences, engineering, and social sciences research. This application carries relatively low risk and high value, making it a common starting point.

Administrative operations. Enrollment management, student services chatbots, HR processes, and financial aid inquiries all benefit from AI automation. These back-office applications free staff for higher-value work and often deliver measurable ROI within months.

Teaching and learning. Course design assistance, personalized feedback, and AI tutoring agents represent the fastest-growing category. Indiana University's Kelley School of Business found students using Microsoft Copilot improved performance by 10% and reduced task completion time by 40%, according to research cited in a Microsoft-sponsored IDC white paper.

Cybersecurity. Oregon State University, Auburn University, and Singapore Management University use AI-powered threat detection in their security operations centers. This approach addresses staffing shortages while giving students hands-on experience.

Platform Considerations

Major platforms include OpenAI (ChatGPT), Anthropic (Claude), Google (Gemini), Microsoft (Copilot), and open-source options like Meta's Llama. Evaluate them on data privacy requirements, integration with existing systems, enterprise support, and cost structures.

Georgia Tech's AI strategy partners with Microsoft, OpenAI, and NVIDIA simultaneously. This multi-vendor approach avoids lock-in and lets the institution match tools to use cases. Your contracts should preserve flexibility to switch providers as the market evolves.

Assessment Questions

Which domains have you prioritized for LLM implementation?

Have you evaluated multiple platforms against your specific requirements?

Do your vendor contracts allow flexibility as technology changes?

3. Structuring AI Literacy and Deep Learning Education

The Cengage Group's 2025 AI in Education Report found that 65% of higher education students believe they know more about AI than their instructors. Whether accurate or not, this perception undermines faculty authority and creates friction in the classroom.

Faculty development must become the foundation of your AI education strategy. This means embedding AI training into professional development as a requirement, not an optional workshop. Vanderbilt University's Institute for the Advancement of Higher Education offers an online resource hub helping educators understand generative AI in course design. Your faculty need similar support tailored to your institutional context.

Curriculum Integration Models

You have three options for integrating AI into curriculum.

AI as subject. Dedicated courses in artificial intelligence, machine learning, and data science. These serve students pursuing technical careers but reach a limited population.

AI as tool. Integration into existing courses across disciplines. Writing courses teach AI-assisted revision. Research methods courses cover AI literature review. Design courses use generative tools for prototyping. This approach reaches more students but requires broader faculty buy-in.

AI as literacy requirement. Baseline competency for all graduates regardless of major. This treats AI fluency like writing or quantitative reasoning. Few institutions have implemented this yet, but workforce expectations are pushing in this direction.

Research Training

Graduate and postdoctoral researchers need specific guidance. The University of Alberta collaborated with peer institutions including the University of Manitoba and Vancouver Island University to develop guidelines for AI in graduate research. These cover AI use in literature synthesis, data analysis, writing, and questions of co-authorship. Your graduate programs should address these issues explicitly rather than leaving students to guess.

Assessment Questions

Does your faculty development program address AI fluency?

Are curriculum changes keeping pace with workforce expectations?

Do graduate programs have clear AI use guidelines?

4. Establishing Generative AI Policies That Work

Policy approaches fall on a spectrum. Columbia University's Office of the Provost released a university-wide generative AI policy that requires explicit permission for AI use. Other institutions permit AI unless explicitly prohibited. Most top-ranked universities have landed on a tiered approach where policies vary by course, assignment type, or department.

The tiered model reflects reality. A creative writing seminar has different concerns than a data science course. Blanket policies either restrict beneficial uses or fail to protect against misuse.

Core Policy Components

Your policy should address six areas.

Scope. Define who is covered: students, faculty, staff, researchers, or all. Many early policies focused only on students and left faculty use unaddressed.

Permitted uses. Create clear categories. Research support, administrative tasks, and brainstorming may be broadly permitted. Assessment and grading may require restrictions.

Prohibited uses. Specify what constitutes an academic integrity violation. The Duke Center for Teaching and Learning notes that unauthorized AI use is treated as cheating under the Duke Community Standard and that instructors have discretion to define permitted use.

Disclosure requirements. When and how must AI use be acknowledged? Some institutions require conversation transcripts as appendices. Others accept a simple statement.

Data handling. What information can and cannot be entered into AI tools? Student data, research data, and proprietary institutional information may require different treatment.

Enforcement. Align consequences with existing academic integrity processes. New violations should not require new adjudication systems.

Regulatory Context

The European Union's AI Act creates transparency requirements and risk categorization affecting institutions with European students or partnerships. If you operate study abroad programs, have EU-based online students, or partner with European institutions, these rules may apply to you.

The U.S. Department of Education has issued guidance emphasizing institutional discretion rather than federal mandates. State-level guidance varies, with some states developing AI-specific education frameworks. Monitor your accreditation bodies for emerging standards on AI in assessment.

A Frontiers in Education study examining AI policy frameworks found that institutions need regular evaluation and refinement of their guidelines based on continuous feedback and real-world experience.

Policy Development Process

Include student voice in policy creation. Most 2023 and 2024 policies were developed without meaningful student input. Build in annual review cycles at minimum. Communicate policies through multiple channels repeatedly.

Assessment Questions

Are your policies clear, accessible, and consistently enforced?

Do policies address both academic and administrative AI use?

Is there a review mechanism to update policies as technology evolves?

5. Centering Students in AI Strategy

Students are already using these tools extensively. The Higher Education Policy Institute's 2025 survey found AI usage for assessment preparation jumped from 53% to 88% in one year. U.S. data shows similar patterns.

Students use AI primarily for explaining concepts, assessment preparation, brainstorming, and research. The Cengage Group report found that 45% wish their professors taught AI skills in relevant courses. They want to learn responsible AI use, not just be told what is banned.

Equity and Access

Premium AI tools create advantage gaps. Students who can afford $20 monthly subscriptions access capabilities that free tiers do not offer. Institutional licensing can level this playing field. Consider also device and connectivity requirements. AI-enabled learning assumes reliable internet access and capable hardware.

Student Success Applications

AI can strengthen student support infrastructure. Early warning systems identify at-risk students by analyzing engagement patterns. Personalized learning pathways adapt to individual progress. Chatbots extend help beyond office hours. Career services integration provides resume feedback and interview preparation.

An EDUCAUSE Review analysis describes how institutions are building data-informed, student-centered ecosystems by combining AI analytics with library resources and success center coaching.

Guarding Against Harm

Over-reliance on AI may weaken critical thinking and foundational skills. Mental health considerations also matter. AI should supplement, not replace, human connection. Clear boundaries between AI support and human mentorship protect the student experience.

Assessment Questions

Do students have equitable access to AI tools?

Are student perspectives included in AI governance?

Is there support infrastructure to help students use AI productively?

6. Recruiting and Retaining AI Expertise

PhD-level AI talent remains scarce. Veris Insights research on AI hiring notes that AI job postings on LinkedIn jumped 50% while the supply of new AI PhDs amounts to only a few hundred annually. Competition from tech companies offering higher salaries makes university hiring harder.

You compete as both an employer and a talent pipeline. Your best AI students have offers from companies that can double or triple academic salaries.

Strategic Hiring Approaches

Consider four models.

New leadership roles. Chief AI Officer positions provide executive-level coordination. George Mason University appointed its first Chief AI Officer to oversee strategy across education and research. This role signals institutional commitment and creates accountability.

Faculty lines. Dedicated AI faculty should exist across departments, not just in computer science. Business, health sciences, humanities, and social sciences all need faculty who can connect AI to disciplinary applications.

Staff expertise. Data engineers, AI trainers, and instructional designers with AI fluency support faculty and students. These roles often go unfilled because institutions do not recognize the need until projects stall.

Hybrid models. Fractional or consulting arrangements address specialized needs without full-time commitments. This works for implementation projects with defined timelines.

Retention and Development

Create research environments that appeal to AI talent. Autonomy, cross-functional collaboration, and publication opportunities matter more to many researchers than salary alone. Build internal pipelines by upskilling existing staff. Partner with industry for co-research opportunities that benefit both parties.

Assessment Questions

Do you have dedicated AI leadership at the executive level?

Is your compensation competitive for technical AI roles?

Are you developing internal AI expertise or relying solely on external hires?

The Readiness Question

LLM adoption is a strategic imperative, not a technology project. It touches teaching, research, operations, student experience, workforce preparation, and institutional reputation.

Use the assessment questions throughout this article as a diagnostic. Where you answer "no" or "I don't know," you have found your starting points.
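One way to run this diagnostic is to record an answer for each question and list everything that is not a clear "yes," grouped by area. The sketch below is illustrative only: the area labels and sample answers are hypothetical, and only a few of the article's questions are included for brevity.

```python
from collections import defaultdict

# Sample answers (hypothetical). Keys are (area, question) pairs;
# values are "yes", "no", or "unknown".
answers = {
    ("Strategy", "Do you have a written AI/LLM strategic plan?"): "no",
    ("Strategy", "Is there clear ownership and accountability for AI initiatives?"): "yes",
    ("Policy", "Is there a review mechanism to update policies as technology evolves?"): "unknown",
    ("Students", "Do students have equitable access to AI tools?"): "yes",
    ("Talent", "Do you have dedicated AI leadership at the executive level?"): "no",
}


def starting_points(responses: dict[tuple[str, str], str]) -> dict[str, list[str]]:
    """Group every question not answered 'yes' under its area."""
    gaps: dict[str, list[str]] = defaultdict(list)
    for (area, question), answer in responses.items():
        if answer.strip().lower() != "yes":
            gaps[area].append(question)
    return dict(gaps)


for area, questions in starting_points(answers).items():
    print(area)
    for question in questions:
        print(f"  - {question}")
```

Treating "unknown" the same as "no" is a deliberate choice here: not knowing whether a review mechanism exists is itself a gap worth assigning to someone.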

Speed matters, but so does intentionality. Institutions that rush into adoption without governance structures create problems they will spend years fixing. Institutions that wait for perfect clarity will find themselves increasingly distant from student expectations and workforce realities.

Conduct an institutional AI readiness assessment within the next quarter. Identify gaps. Assign ownership. Set timelines. The institutions that act now will hold advantages in enrollment, research funding, and operational performance for years to come.
